
[2022] Setting Up a Windows 11 + Docker + GPU Environment in Seconds

Introduction

As the title says, this post tries out Docker with a GPU on Windows 11.

Checking the WSL version

Confirm that every distribution is running under WSL version 2.
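For repeated setups, the same check can be scripted. The following is a minimal sketch that parses text in the format `wsl -l -v` prints and reports any distribution that is not on version 2. The function name `parse_wsl_list` and the sample text are illustrative (the sample mirrors the listing in this article). When capturing the real command's output, note that `wsl.exe` typically emits UTF-16LE, so the bytes may need decoding first; that detail is an assumption about your environment.

```python
def parse_wsl_list(text):
    """Parse `wsl -l -v` style text into a list of dicts, one per distribution."""
    distros = []
    for line in text.splitlines():
        line = line.strip()
        if not line:
            continue
        is_default = line.startswith("*")  # "*" marks the default distribution
        parts = line.lstrip("*").split()
        # Skip the header row and anything that does not end in a version number.
        if len(parts) < 3 or not parts[-1].isdigit():
            continue
        distros.append({
            "name": " ".join(parts[:-2]),
            "state": parts[-2],
            "version": int(parts[-1]),
            "default": is_default,
        })
    return distros


# Sample text mirroring the listing shown in this article.
SAMPLE = """\
  NAME                   STATE           VERSION
* kali-linux             Running         2
  docker-desktop-data    Running         2
  docker-desktop         Running         2
"""

distros = parse_wsl_list(SAMPLE)
not_v2 = [d["name"] for d in distros if d["version"] != 2]
print("all on WSL 2" if not not_v2 else f"not on WSL 2: {not_v2}")
```

An empty `not_v2` list means every listed distribution is on WSL 2, which is what the Docker Desktop GPU setup in this article relies on.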

>wsl -l -v

  NAME                   STATE           VERSION
* kali-linux             Running         2
  docker-desktop-data    Running         2
  docker-desktop         Running         2

Downloading the Docker image

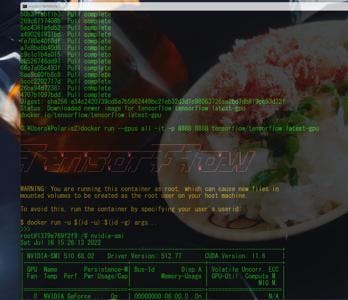

Download the image with the pull command.

>docker pull tensorflow/tensorflow:latest-gpu

latest-gpu: Pulling from tensorflow/tensorflow

d5fd17ec1767: Pull complete

50b37fabf1b7: Pull complete

269c6117408b: Pull complete

5ec4361a6d52: Pull complete

a490261931bd: Pull complete

fe780e40f0df: Pull complete

a7e8be5b40d6: Pull complete

c9c1c1b4a015: Pull complete

c8526746dd97: Pull complete

66c7a05c493f: Pull complete

8ae9c60fb8c8: Pull complete

5ccc2202717d: Pull complete

26ba94d72381: Pull complete

4707b1097bdd: Pull complete

Digest: sha256:a34c2420739cd5a7b5662449bc21eb32d3d1c98063726ae2bd7db819cc93d72f

Status: Downloaded newer image for tensorflow/tensorflow:latest-gpu

docker.io/tensorflow/tensorflow:latest-gpu

Starting the Docker image

>docker run --gpus all -it -p 8888:8888 tensorflow/tensorflow:latest-gpu

________                               _______________
___  __/__________________________________  ____/__  /________      __
__  /  _  _ \_  __ \_  ___/  __ \_  ___/_  /_   __  /_  __ \_ | /| / /
_  /   /  __/  / / /(__  )/ /_/ /  /   _  __/   _  / / /_/ /_ |/ |/ /
/_/    \___//_/ /_//____/ \____//_/    /_/      /_/  \____/____/|__/

WARNING: You are running this container as root, which can cause new files in

mounted volumes to be created as the root user on your host machine.

To avoid this, run the container by specifying your user's userid:

$ docker run -u $(id -u):$(id -g) args...
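The warning above suggests passing `-u uid:gid` so that files created in mounted volumes are not owned by root. As a rough sketch, the command line can be assembled in Python so that the optional user flag is easy to toggle. The helper name `docker_run_command` and the `1000:1000` value are hypothetical; the flags themselves (`--gpus`, `-it`, `-p`, `-u`) are the ones used in this article and in the warning.

```python
import shlex


def docker_run_command(image, port=8888, gpus="all", user=None):
    """Build the argument list for an interactive `docker run` with GPU access."""
    cmd = ["docker", "run", "--gpus", gpus, "-it", "-p", f"{port}:{port}"]
    if user is not None:
        # e.g. "1000:1000"; the Windows command prompt has no `id`,
        # so the value is passed explicitly instead of $(id -u):$(id -g).
        cmd += ["-u", user]
    cmd.append(image)
    return cmd


image = "tensorflow/tensorflow:latest-gpu"
as_root = docker_run_command(image)
as_user = docker_run_command(image, user="1000:1000")  # hypothetical uid:gid

print(shlex.join(as_root))
print(shlex.join(as_user))
```

The first printed line reproduces the command used in this article; the second adds the non-root user. Either list can be handed to `subprocess.run` as-is.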

>>>

Checking the CUDA driver

Run the nvidia-smi command inside the container to check the CUDA driver.
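When only a few fields are needed, nvidia-smi can also print them as CSV through its `--query-gpu` and `--format` options, which is easier to consume from a script than the full table. The sketch below assumes nvidia-smi is on the PATH (for example inside the container); the helper names and the sample line are illustrative, with the sample values taken from the output in this article.

```python
import subprocess

QUERY_FIELDS = ["name", "driver_version", "memory.used", "memory.total"]


def parse_gpu_csv(text):
    """Parse `--format=csv,noheader,nounits` output into one dict per GPU."""
    gpus = []
    for line in text.strip().splitlines():
        values = [v.strip() for v in line.split(",")]
        gpus.append(dict(zip(QUERY_FIELDS, values)))
    return gpus


def query_gpus():
    """Run nvidia-smi and return the parsed rows (requires nvidia-smi on PATH)."""
    result = subprocess.run(
        [
            "nvidia-smi",
            "--query-gpu=" + ",".join(QUERY_FIELDS),
            "--format=csv,noheader,nounits",
        ],
        capture_output=True,
        text=True,
        check=True,
    )
    return parse_gpu_csv(result.stdout)


# Hand-written sample line using the values reported in this article.
sample = "NVIDIA GeForce RTX 2070, 512.77, 3430, 8192\n"
gpu = parse_gpu_csv(sample)[0]
print(gpu["name"], gpu["memory.used"], "/", gpu["memory.total"], "MiB")
```

Calling `query_gpus()` in the container returns the same structure for the real hardware. The manual command and its full table output follow.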

root@1379e769f2f9:/# nvidia-smi

Sat Jul 16 15:26:13 2022

+-----------------------------------------------------------------------------+
| NVIDIA-SMI 510.68.02    Driver Version: 512.77       CUDA Version: 11.6     |
|-------------------------------+----------------------+----------------------+
| GPU  Name        Persistence-M| Bus-Id        Disp.A | Volatile Uncorr. ECC |
| Fan  Temp  Perf  Pwr:Usage/Cap|         Memory-Usage | GPU-Util  Compute M. |
|                               |                      |               MIG M. |
|===============================+======================+======================|
|   0  NVIDIA GeForce ...  On   | 00000000:06:00.0  On |                  N/A |
|  0%   43C    P8    13W / 175W |   3430MiB /  8192MiB |     10%      Default |
|                               |                      |                  N/A |
+-------------------------------+----------------------+----------------------+

+-----------------------------------------------------------------------------+
| Processes:                                                                  |
|  GPU   GI   CI        PID   Type   Process name                  GPU Memory |
|        ID   ID                                                   Usage      |
|=============================================================================|
|  No running processes found                                                 |
+-----------------------------------------------------------------------------+

Checking TensorFlow and the GPU

Import tensorflow and check its version.

root@1379e769f2f9:/# python3

Python 3.8.10 (default, Mar 15 2022, 12:22:08)

[GCC 9.4.0] on linux

Type "help", "copyright", "credits" or "license" for more information.

>>> import tensorflow

>>> tensorflow.version

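<module 'tensorflow._api.v2.version' from '/usr/local/lib/python3.8/dist-packages/tensorflow/_api/v2/version/__init__.py'>

Note that tensorflow.version is a module, so the REPL prints the module object rather than a version number; the version string itself is available as tensorflow.__version__.

Next, run the following commands to list the devices TensorFlow can see.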

>>> from tensorflow.python.client import device_lib

>>> device_lib.list_local_devices()

2022-07-16 15:26:48.017885: I tensorflow/core/platform/cpu_feature_guard.cc:193] This TensorFlow binary is optimized with oneAPI Deep Neural Network Library (oneDNN) to use the following CPU instructions in performance-critical operations: AVX2 FMA

To enable them in other operations, rebuild TensorFlow with the appropriate compiler flags.

2022-07-16 15:26:48.317108: I tensorflow/stream_executor/cuda/cuda_gpu_executor.cc:961] could not open file to read NUMA node: /sys/bus/pci/devices/0000:06:00.0/numa_node

Your kernel may have been built without NUMA support.

2022-07-16 15:26:48.339390: I tensorflow/stream_executor/cuda/cuda_gpu_executor.cc:961] could not open file to read NUMA node: /sys/bus/pci/devices/0000:06:00.0/numa_node

Your kernel may have been built without NUMA support.

2022-07-16 15:26:48.339978: I tensorflow/stream_executor/cuda/cuda_gpu_executor.cc:961] could not open file to read NUMA node: /sys/bus/pci/devices/0000:06:00.0/numa_node

Your kernel may have been built without NUMA support.

2022-07-16 15:26:49.263128: I tensorflow/stream_executor/cuda/cuda_gpu_executor.cc:961] could not open file to read NUMA node: /sys/bus/pci/devices/0000:06:00.0/numa_node

Your kernel may have been built without NUMA support.

2022-07-16 15:26:49.263519: I tensorflow/stream_executor/cuda/cuda_gpu_executor.cc:961] could not open file to read NUMA node: /sys/bus/pci/devices/0000:06:00.0/numa_node

Your kernel may have been built without NUMA support.

2022-07-16 15:26:49.263563: I tensorflow/core/common_runtime/gpu/gpu_device.cc:1616] Could not identify NUMA node of platform GPU id 0, defaulting to 0. Your kernel may not have been built with NUMA support.

2022-07-16 15:26:49.263966: I tensorflow/stream_executor/cuda/cuda_gpu_executor.cc:961] could not open file to read NUMA node: /sys/bus/pci/devices/0000:06:00.0/numa_node

Your kernel may have been built without NUMA support.

2022-07-16 15:26:49.264092: I tensorflow/core/common_runtime/gpu/gpu_device.cc:1532] Created device /device:GPU:0 with 5967 MB memory: -> device: 0, name: NVIDIA GeForce RTX 2070, pci bus id: 0000:06:00.0, compute capability: 7.5

[name: "/device:CPU:0"

device_type: "CPU"

memory_limit: 268435456

locality {

}

incarnation: 16846278558184796431

xla_global_id: -1

, name: "/device:GPU:0"

device_type: "GPU"

memory_limit: 6256852992

locality {

bus_id: 1

links {

}

}

incarnation: 14404479112550200897

physical_device_desc: "device: 0, name: NVIDIA GeForce RTX 2070, pci bus id: 0000:06:00.0, compute capability: 7.5"

xla_global_id: 416903419

]

>>>

The GPU (NVIDIA GeForce RTX 2070) was detected successfully.

Next time, I would like to try the same setup with PyTorch.
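To make the device listing easier to read, here is a small sketch that pulls the fields out of the `physical_device_desc` string and converts `memory_limit` from bytes to MiB. Both values are copied from the output above; the helper name `parse_device_desc` is illustrative and not part of TensorFlow.

```python
def parse_device_desc(desc):
    """Split a 'key: value, key: value' description string into a dict."""
    fields = {}
    for part in desc.split(", "):
        key, _, value = part.partition(": ")
        fields[key] = value
    return fields


# Values copied from the list_local_devices() output in this article.
desc = ("device: 0, name: NVIDIA GeForce RTX 2070, "
        "pci bus id: 0000:06:00.0, compute capability: 7.5")
memory_limit = 6256852992  # bytes

info = parse_device_desc(desc)
memory_mib = memory_limit // 2**20  # bytes -> MiB

print(info["name"], "compute capability", info["compute capability"])
print(memory_mib, "MiB")
```

The converted value, 5967 MiB, matches the "Created device /device:GPU:0 with 5967 MB memory" line in the log. For a shorter check in everyday use, the public `tf.config.list_physical_devices("GPU")` call returns the visible GPUs without going through `tensorflow.python.client`.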